Psi*

Tech Monkey

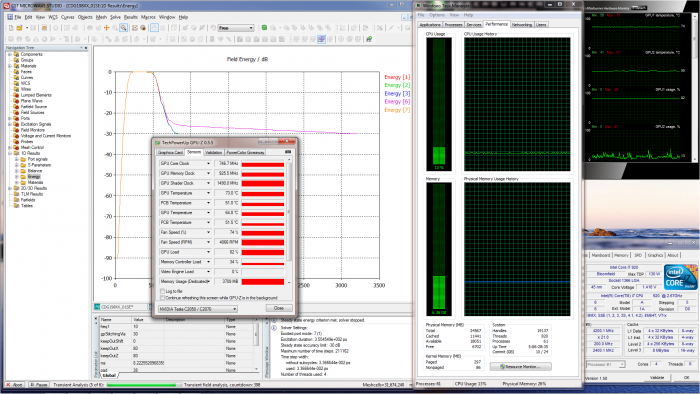

I have a Tesla C2070 for double-precision number crunching. I paid about $2200 for it from a legit company, and it is at least 5X faster than the i7-990X OC-ed to 4.5GHz. Nice. I am happy.


But in my formerly rare-to-never ventures on eBay, I am starting to see these new Tesla M2090s show up, and for <<$2K. This card has 512 CUDA cores versus 448 in the C2070, and my software guys tell me to expect a 20% improvement.
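For a rough sanity check on that number, here is a minimal sketch of the ideal-case arithmetic. It assumes throughput scales linearly with core count (and with clock, if it differs), which real workloads rarely manage. The function name `ideal_speedup` and the `clock_ratio` parameter are made up for illustration; only the core counts come from the post.

```python
def ideal_speedup(old_cores, new_cores, clock_ratio=1.0):
    """Ideal throughput ratio, ASSUMING linear scaling with cores and clock.

    clock_ratio is new_clock / old_clock; it defaults to 1.0 (same clocks)
    because no clock speeds are given in the post.
    """
    return (new_cores / old_cores) * clock_ratio


# Core counts from the post: C2070 = 448, M2090 = 512.
ratio = ideal_speedup(448, 512)
print(f"Cores alone: {100 * (ratio - 1):.1f}% more throughput")
```

With equal clocks the core count by itself accounts for about 14%, so a 20% estimate would imply that some of the gain comes from something else, such as a higher clock.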

Background in a nutshell ... did I forget to mention that I OC the C2070 by 34%? I am using an MSI utility for their Nvidia cards to handle the OC & fan control. When the C2070 was initially installed (bone stock), the card's temp was around 83 deg C at idle. Confused, concerned, & frustrated, I pulled the card out & parked it for a few weeks until I could get more info. Nvidia does offer a utility for setting the Core, Shader, & Memory clocks as well as controlling the onboard fan ... but I could not get it to work at all, or at least could not get it to "remember" the settings. I have no recollection of why I decided to even try the MSI utility, but it was trivial to use. So easy that I haven't even tried to find an alternative, much less go back to the Nvidia utility. And at idle, the fan keeps the card at about 58 deg C. When crunching, the fan speeds up as necessary per the card temp. Just like you would want. What the heck?

Back to the M2090. This thing does not have an integrated fan. If you follow that link above, the pic of the card with a large heat-pipe heatsink is the only current offering. So while I wait for it to show up, I am thinking about what could be done. I cannot find any kind of picture or board layout (w/o the heatsink) to get an idea before it shows up. Since most people are probably paying >$4K for these things, and probably not with their own money, they may be a bit hesitant to dig into them much. Me, I am all over any way to get things done faster.

I will be posting pics of the card when it shows up. I suspect that I will pull the heatsink pretty quickly & am hoping that some GeForce GTX 580 cooling solution would fit right on. Maybe someone has WC-ed a GTX 580 & would sell the stock cooler!

Stay tuned ... pics sometime soon.
