Tesla M2090

- Thread starter Psi*

atypicalguy

Obliviot

Looks like the server setup is just some little fans on one end that blow longitudinally through the fins. So put a plate over the fins that extends beyond the end of the card, and mount some fans on that with glue or whatever. Here is the server setup:

http://www.supermicro.com/products/system/1U/5017/SYS-5017GR-TF.cfm

Seems like two of these should work:

http://www.aliexpress.com/store/pro...-Server-Fan-Cooling-Fan/802974_591893376.html

Not sure how to get the plate to stick to the fins, but perhaps there is some kind of thermally conductive double sided tape or glue/paste. I would probably just glue the fans to the plate overhang, or bend the aluminum into an L shape and bolt them on. Agricultural, to be sure, but should be effective and relatively compact. Will post photos when I tear into it.

The problem with that is the 6-pin fan connector. I have no idea how that would be hooked up inside an ATX system. A brief search doesn't show up any adapters or add-on cards.

Psi*

Tech Monkey

I thought about adding fans + a shield across the top. I have a Lian Li case with a squirrel cage blower & considered adding one of those to the end ... with a shield across the top. The blower would not have to be inside the case but could be outside pushing air in. Last could be a couple of 60 mm fans added to & pushing air into the fins.

I decided I didn't like any of those. Although I took the heat sink + heat spreader off this M2090, I think better would have been to keep the heat spreader on & just replace the heat pipe with the water block. The heat pipe heatsink is just for the GPU & fits thru a square opening in the heat spreader. The front/back heat spreader is pressed against the memory chips with thick heat tape & voltage regulators with a thick rubbery like TIM. It will be a bit challenging finding the right stuff to put in their place. Obviously Nvidia was not too concerned with conducting a lot of heat off those devices.

I haven't received the water block as yet to check fit. But I am hoping that it will fit thru the cutout in the heat spreader onto the GPU. The C2070 I have is substantially overclocked & can run at 50% to >90% for many hours. In another thread I have discussed that and I would prefer a similar setup but don't see it happening. Sooo this is why I am looking to water cool this card. I think the external radiator will be much easier to blow the dirt out of ...

atypicalguy

Obliviot

In that case, I would think any GPU cooler configured for a higher end NVIDIA board would work, e.g.

http://www.coolerguys.com/840556098799.html

Not as elegant as water, but it is probably a bit easier to get up and running in my case.

How does one remove the existing fin assembly (I assume this is what is meant by "pipe") to expose the gpu and attach the new block (of whatever cooler)?

Thanks,

Karl

I -think- that cooler would work no problem, but I can't say for sure; the schematics would need to be looked at. I can see water being easier to install since it's just a block, and little heatsinks can be used for the GDDR, but a full cooler like that might be iffy. It does seem to be raised all around the core, but I can't tell for sure from the pictures shown.

atypicalguy

Obliviot

I talked to one of the cooler guys at the website store. He said he would probably stick with the existing heat sink/fins and put a PWM fan on it. Looks like the fin planform short axis is about 8cm; could go with two 70mm on the side or one of these (non-PWM) blowers on the end: http://www.coolerguys.com/840556094272.html

I need to take a look at case space when I get home. He was not sure about whether the full setup I mentioned above would fit. Probably just run pwm fan mounting screws right between the fins. Anyway a $30 solution, or less, either way.

From a countercurrent thermal exchange standpoint it is probably best to suck from directly over the gpu with inlets at ends.

Hmm, that's an interesting idea. I actually thought of hooking something like a Delta fan to the end and controlling it with a fan controller, but that solution is much more elegant. The 17 CFM rating kind of seems small, but I guess this is a concentrated area, so it should be quite effective - and if so, that's one hell of an inexpensive solution.
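As a rough sanity check on whether 17 CFM is "small", one can estimate how much the air warms up if it carries away all of the card's heat. The sketch below assumes a 225 W heat load (an assumed board power, not a figure from this thread), sea-level air density, and that every watt ends up in the air stream, so treat the result as an order-of-magnitude estimate only.

```python
CFM_TO_M3_PER_S = 0.000471947  # one cubic foot per minute, in m^3/s


def air_temp_rise_c(heat_w, flow_cfm, air_density=1.2, cp_air=1005.0):
    """Temperature rise (deg C) of an air stream that absorbs heat_w watts.

    air_density is in kg/m^3 and cp_air in J/(kg*K); both are typical
    room-temperature values, assumed here for illustration.
    """
    mass_flow = air_density * flow_cfm * CFM_TO_M3_PER_S  # kg/s
    return heat_w / (mass_flow * cp_air)


# Assumed 225 W load with the 17 CFM blower rating mentioned above.
rise = air_temp_rise_c(225.0, 17.0)
print(f"Exhaust is roughly {rise:.0f} C warmer than the intake air")
```

With those assumptions the exhaust comes out a little over 20 C warmer than the intake, which suggests 17 CFM is workable only if all of it actually goes through the fins.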

atypicalguy

Obliviot

PWM blower

FYI this one has PWM control/ 4 pin plug:

http://www.abmx.com/4pin-pwm-blower-97-x-33-cm-for-114xx-123xx-server-models

It says 97"cm" but that would be kind of big and would not fit into a server, so I think it is a typo and they mean "mm". Power at 1.5A 12V seems like it might not drive a 1meter fan either...

K

atypicalguy

Obliviot

cheaper here: http://www.provantage.com/supermicro-fan-0038l4~7SUPM3T8.htm

And on the small format axial fans, this one is loud (59dB-louder than a quiet vacuum cleaner), but 4-pin, rated at 2.61" static pressure (I think this is why they like these counterrotating fans in servers):

http://www.malabs.com/product/CA-FAN01L4

The blower above is only 28dB. Options here:

http://www.supermicro.com/support/resources/Thermal/#FAN

Power at 1.5A 12V seems like it might not drive a 1meter fan either...

LOL!

Hmm, 28 dBA isn't too bad at all, if it works well. If you do happen to pick one of these up I'd love to see before / after temperatures. Given there's no airflow at -all- beforehand, it wouldn't surprise me if temps dropped at least 20C.

atypicalguy

Obliviot

photos

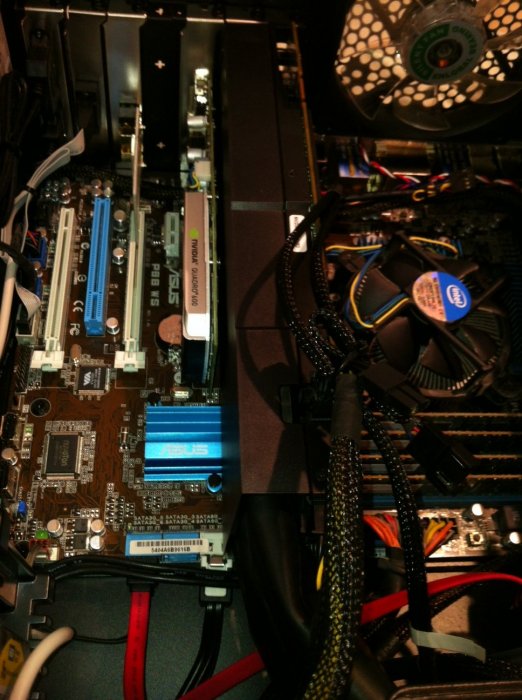

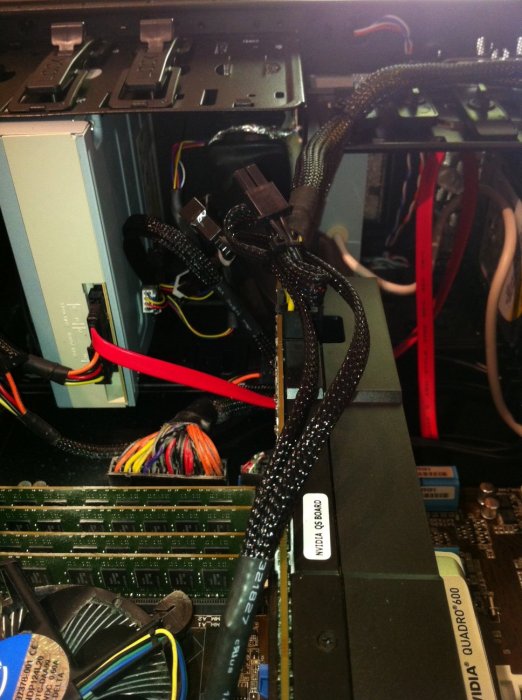

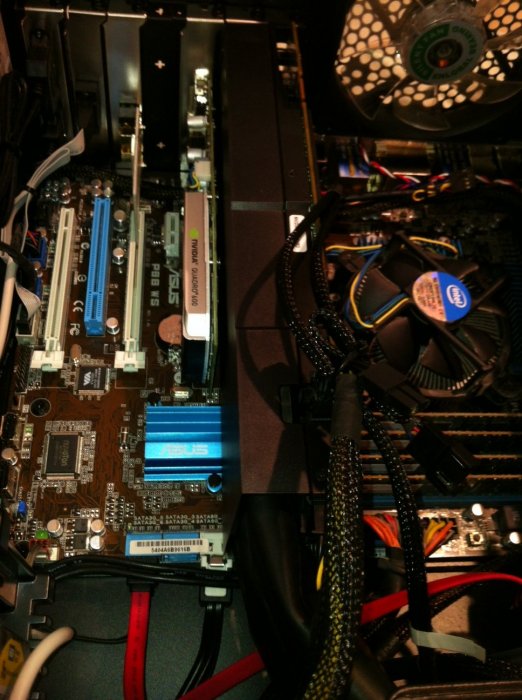

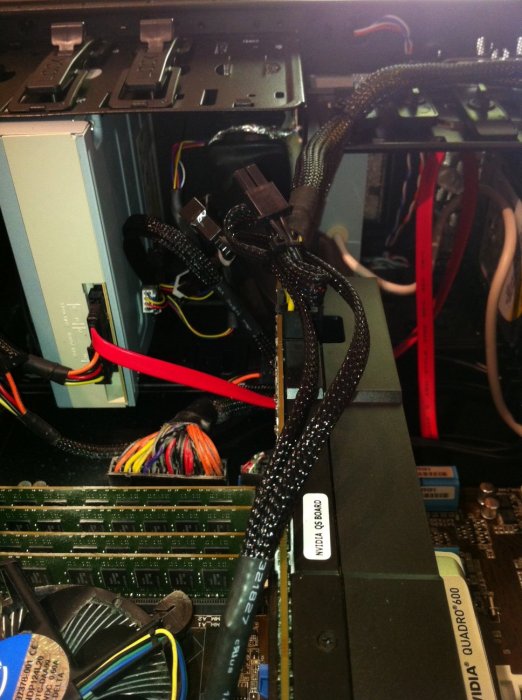

The card I got has a nice plastic case. It looks like a C2075 or similar, but there is no fan. I purchased a mounting plate for it and some little fans from ATACOM, who are fantastic to deal with.

Re: the fans, I bought the sequential counterrotating ones found in servers. They have the highest service pressure of over 2", which I figured would come in handy. But they are also the loudest. Especially when you have two of them. And each one draws 1.8 amps, so you need to run each one from a separate plug on the motherboard. Fortunately I had a couple pwm plugs to spare.

Getting the pwm to shut the fans down is hard; just now they are keyed to the cpu while I try to get Ubuntu to run them from the Gpu temp. But no luck. They seem to spool up just fine with the CUDA samples library so I think it will be fine. Just loud. Really loud. As in "buy-a-different-fan" loud.
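On the "two of them" point: sound levels add logarithmically, so a second identical fan does not double the dB figure. A minimal sketch, assuming the two fans are independent noise sources and that the 59 dB rating quoted earlier in the thread applies to each of them (the rating for the fans actually purchased is not stated):

```python
import math


def combined_db(levels_db):
    """Combined level of several independent (incoherent) noise sources."""
    total_power = sum(10.0 ** (level / 10.0) for level in levels_db)
    return 10.0 * math.log10(total_power)


# Two fans at an assumed 59 dB each.
two_fans = combined_db([59.0, 59.0])
print(f"{two_fans:.1f} dB combined")
```

Two equal sources come out about 3 dB above one, so the second fan is audible but the bigger lever is the per-fan rating.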

I cut two lengths of bike inner tube from a flat I had the other day, stuffed a fan outlet into each one, used double sided carpet tape to stick them to the case in a CD drive slot, sucking in through the front screen. Added a ribbon of tin foil running down the inside of each tube to keep the static from building up. Those ribbons run outside to the case. Then I ran the other end of each tube down into the end vent of the Tesla. Two tube ends fill up the vent hole pretty well. When the fans spool up, there is a bunch of pretty hot air coming out the back GPU vent, so I would say the plan is working well enough.

NVIDIA Settings is not giving me the GPU temp on the 2090, so not sure what to tell you. Either one cannot monitor it, and NVIDIA just assumes the heat sink can handle reality, or I am not using the correct tool to monitor. It is version 295.41 (I think) of the driver with the latest toolkit for CUDA/SDK etc from their website. The Settings application shows all the details from the 2090, just no temp. I tried enabling Coolbits options 1 and 4 in the etc/.../xorg.conf file, but no dice.
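Another tool worth trying is `nvidia-smi`, which ships with the driver. The sketch below polls it from Python; it assumes a driver whose `nvidia-smi` supports the `--query-gpu` option (older releases such as the 295 series may not), and it treats any non-numeric answer such as "N/A" as "no sensor reading available".

```python
import subprocess


def parse_temperature(raw):
    """Return the temperature in deg C as an int, or None if none is reported."""
    lines = raw.strip().splitlines()
    try:
        return int(lines[0])
    except (ValueError, IndexError):
        return None  # empty output or a non-numeric value such as "N/A"


def read_gpu_temperature(index=0):
    """Ask nvidia-smi for one GPU's temperature; None if it cannot be read."""
    cmd = [
        "nvidia-smi",
        f"--id={index}",
        "--query-gpu=temperature.gpu",
        "--format=csv,noheader,nounits",
    ]
    try:
        result = subprocess.run(
            cmd, capture_output=True, text=True, check=True, timeout=10
        )
    except (OSError, subprocess.SubprocessError):
        return None  # tool missing, timed out, or exited with an error
    return parse_temperature(result.stdout)
```

Calling `read_gpu_temperature(1)` would query the second GPU in the machine; a `None` result on a passively cooled module would be consistent with the board simply not exposing a sensor.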

The machine seems to send the proper sorts of jobs to the 2090 rather than the Quadro 600 driving the monitor, so that is nice. It will run in multi gpu mode, but the Quadro is a bit overmatched at this point...I think most of my stuff will be single CPU core feeding the Tesla, which is a waste of a CPU actually, but oh well - whatever saves time. I should be able to run the code with and without the Tesla to compare.

Anyway thanks very much for this thread and for the input. Will post again when I find a better fan, or come up with a way to muffle these. I will probably just put more inner tube on the inlet side to quiet them down a bit as they are about $25/copy.

Good call with the foil inside the tubes, wouldn't have thought of the static build-up with the rubber. Also, it's not a mod without duck-tape!

With the card itself, the vented holes in the PCB, is there much airflow coming through them? It might be worth blocking the ports off, that way you maximise the amount of air pushed through the card.

In terms of noise, really not a lot you can do. Those fans run loud for a reason, due to the 1U form factor and the huge amounts of air they need to push. If the PWM isn't working as intended, you may need to use either dedicated fan controller with high power output, or fit diodes/resistors to the cables and reduce the voltage.
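For the resistor route, the sizing is plain Ohm's law. This is a minimal sketch: the 9 V target and the 1.2 A draw at that voltage are hypothetical illustration values, not measurements, and a real fan's current changes with voltage, so it would need to be measured first.

```python
def dropping_resistor(supply_v, fan_v, fan_current_a):
    """Series resistor (ohms) and its dissipation (watts) for a target fan voltage.

    fan_current_a is the current the fan draws at fan_v; it has to be
    measured or estimated, it is not a constant of the fan.
    """
    drop_v = supply_v - fan_v
    if drop_v <= 0 or fan_current_a <= 0:
        raise ValueError("need fan_v below supply_v and a positive current")
    return drop_v / fan_current_a, drop_v * fan_current_a


# Hypothetical: run a 12 V fan at 9 V while it draws 1.2 A.
ohms, watts = dropping_resistor(12.0, 9.0, 1.2)
print(f"{ohms:.1f} ohm resistor dissipating {watts:.1f} W")
```

At currents like these the resistor burns several watts, so it needs a suitable power rating and some airflow of its own.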

As for being unable to read temps from the card via software, I'm thinking a third-party app will be required. Now since this is Linux you are dealing with, I'm uncertain as to what's available. A quick search gave me lm_sensors and a front-end xsensors. Though I'm uncertain if they need compiling etc.

As for further improvements to your setup without going water... let's see. First and foremost, I think air filters will be a requirement, just to prevent headaches down the line. I don't know what mounts you are using at the moment, but you could try some vibration reduction with something like these...

http://www.newark.com/qualtek-elect...n-sleeve/dp/21M7149?in_merch=Popular Products

Need to figure out a replacement to the rubber tubing; It's practical to test, but I'm not sure about long-term. Could get some PVC or ABS ducting conduit if you don't need the flexibility. Or a vacuum cleaner hose, preferably one with a smooth interior to minimise drag. There are car radiator pipes too with smooth interiors, but I'm not sure about anti-static qualities.

http://shop.topboats.com/tienda/accesorios-motor/escape-motor/manguera-tubo-escape

You could buy some sheet plastic and glue together a custom duct yourself, depends how far down the DIY hole you want to go. Oh the modding bug, how I miss thee...

atypicalguy

Obliviot

Static dissipation is normal in dust collection systems to prevent fires from static discharge igniting the dust in PVC or other plastic duct/hose, to the extent that tubing is made with internal wires for this purpose. Here it is just to keep from frying the card with static discharge to the cooling fins.

Not much air coming through the card. Plenty of airflow through the long axis of the fins and out the back.

I have found that putting hose on the intake side of the fans quiets them down a bit.

Inner tube seems to be perfect. It would be hard to find other smooth walled tubing that seals as tightly around the fan and provides the requisite flexibility to bend down into the card vent given the space available. Car radiator hose is a great idea though and I'm sure I could find one with the proper bend.

I think the PWM works OK; it is just loud and keyed to the CPU load rather than the GPU load. I would just like them to shut off when the GPU is idle, which it is a lot of the time if not running simulations.

I spent hours last night trying to compile lm-sensors on Ubuntu 10.10 ultimately unsuccessfully, so I will probably just muffle the fan intakes or buy another type of PWM fan if it is still too loud. I do LOVE the concept of tiny counterrotating axial fans, though - the first stage is 17000 rpm and the next stage 13000 rpm max. They hit it according to the motherboard monitor. And two of them together sound a bit like a twin engine aircraft - they are on separate plugs so one gets the interference pattern thrumming from small differences in speed.
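The thrumming is a beat between the two nearly matched fans. A minimal sketch of the arithmetic, assuming the beat is set by the difference in rotation rate (the dominant tone may actually be the blade-pass frequency, which would scale this by the blade count, unknown here); the 16880 rpm figure is a made-up example:

```python
def beat_frequency_hz(rpm_a, rpm_b):
    """Beat frequency between two rotation tones, in Hz."""
    return abs(rpm_a - rpm_b) / 60.0


# One fan at the quoted 17000 rpm, the other hypothetically 120 rpm slower.
beat = beat_frequency_hz(17000, 16880)
print(f"{beat:.1f} beats per second")
```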

Psi*

Tech Monkey

In the number-crunch software I use, when the GPU is loaded the host CPU cores run about a total of 25%. When the host CPU is loaded which happens when the problem will not fit in the memory of the GPU, the GPU is 0% utilization ... is at idle in other words.

Also, in the thread starter post, I mentioned that the C2070 that I have idles at ~83 deg C, or did until I started using the MSI utility. I could not figure out the Nvidia utility. The point is that the GPU always needs some cooling. In the MSI utility a fan speed vs GPU temp control curve can be set up very easily. At 50 deg C the fan is at 50%, at 80 deg C the fan is at 80%, and at 90 deg C it is at 100%. However, the temp never gets above 83 or 84 deg C at 100% GPU. At idle it is 61 or 62 deg C with the integrated fan slightly noticeable. There are ~15 fans altogether in 3 computers. But now I can be on the phone & no one accuses me of being a beauty salon.
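For anyone wanting to reproduce that curve outside the MSI utility, it can be sketched as a piecewise-linear lookup. The three points come from the post above; the straight-line interpolation between them and the clamping below 50 deg C and above 90 deg C are assumptions, since how the utility itself behaves between points is not stated.

```python
# (temperature in deg C, fan duty in percent), from the curve described above.
CURVE = [(50.0, 50.0), (80.0, 80.0), (90.0, 100.0)]


def fan_percent(temp_c, curve=CURVE):
    """Fan duty for a GPU temperature, interpolating linearly between points."""
    if temp_c <= curve[0][0]:
        return curve[0][1]  # clamp to the lowest point (assumed)
    if temp_c >= curve[-1][0]:
        return curve[-1][1]  # clamp to the highest point (assumed)
    for (t0, p0), (t1, p1) in zip(curve, curve[1:]):
        if t0 <= temp_c <= t1:
            return p0 + (p1 - p0) * (temp_c - t0) / (t1 - t0)


for temp in (45, 62, 85, 95):
    print(temp, fan_percent(temp))
```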

The noise you are experiencing I used to have a few years ago with a few Delta fans in different machines I had at the time. People I phoned from my office thought I was blow drying my hair! NOT!

This is what put me on the water cooling path. I have much quieter fans now. On the radiators they are push/pull with filters. I would like to have a little more sophisticated control of the fans in the computers just to slow dust accumulation, but the filters help keep the dust out of the radiators. Elsewhere on this forum I posted a few pics of a totally plugged radiator. Disgusting, I thought Rob was going to ban me for posting those pics!

With Ubuntu you might consider an electronic fan controller like this Zalman. I tried one of these out some time ago, but not that exact one. That link is just the first one I found just now as an example. The one I had used 8 thermistors, and of course I tried to use all eight just to monitor temps, with only 2 or 3 fans being controlled. It worked quite well, but all of that extra wiring made the inside of the case a real rat's nest. But when things warmed up the Deltas would come up to speed very nicely. So I made my phone calls when the CPUs were not doing much.

DarkStarr

Tech Monkey

Bahahah if you want quiet grab 3 360 rads, a MM case and low low rpm fans say 1500 rpm or so (18 of em) and run push pull at 700 rpm. Get universal blocks for the GPUs and a block for the CPU and then enjoy the silence. I am about to go from a single 360 rad to a 360 and 280. Hopefully it will be even quieter.

atypicalguy

Obliviot

Yes the twin hair dryer sound is growing old rapidly. I understand now why you are committed to water cooling.

Interestingly on the air cooling front, however, I did find these:

http://www.olc-inc.com/CFR2B.html

Not cheap in small quantity, but it produces a nice even flow and has a bracket that attaches directly to the fins. Designed to fit on heat sinks apparently. I plan to put one on the end of the card, plug it in and forget about it. As long as the case internal ambient is low enough it should work fine. Unfortunately the 8-pin power plug will probably interfere so it will have to sit off to one side a bit, but no matter.

Thanks again for the info and thoughts. I may join you in waterworld at some point, but for now am just looking for a simple plug and play option.

Psi*

Tech Monkey

Yaaa ... I did squirrel cage fans once upon a time also. Not much air flow. Those seem pretty low. I have one that came in a Lian Li case. It does move air ... but only "ok".

Now you are reminding me of all of the stuff that I have thrown away.

Have you considered sealing up the case vents/leaks & having all case fans pushing air in, and therefore pushing it out thru the M2090? Maybe pulling the air would be better? Although if the fans were pushing in, then you could put filters on the fans. This is much like the servers that these cards are normally packaged in.

atypicalguy

Obliviot

Yes, I considered positive case pressure. Basically, 120mm fans do not generate much pressure at all unless they are high speed, in which case noise is an issue again. All the fans would have to be the same, or you would only pressurize to the level of the weakest one, at which point air would just leak backwards through it.

Good fans pressurizing the case would provide air flow but the servers you mention have fans just like the ones I installed: small and loud. They use those fans because they generate high pressures, and they don't care about the noise in the server farm. Often the fans are deep in the case, right next to the GPU cooling fins. At least this guarantees a certain airflow right where you want it.

Interestingly, I don't think there is a temperature sensor on my board, at least that nvidia-settings will detect. I was reading through some literature today and found this:

Temperature

Readings from temperature sensors on the board. All readings are in degrees C. Not all products support all reading types. In particular, products in module form factors that rely on case fans or passive cooling do not usually provide temperature readings. See below for restrictions.

GPU: Core GPU temperature. For all discrete and S-class products.

from: http://manpages.ubuntu.com/manpages/natty/man1/alt-nvidia-current-smi.1.html

So I don't think there is a temp sensor output from the board. It's back to thermistors at the exhaust vent for any sort of intelligent temp management. Or just leave a fan on all the time (or water cooler). Water is looking good but I think I will try the squirrel cage next to the unit and measure the outlet temp under load before I take the plunge (pardon the pun).
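A quick way to check whether the driver reports a temperature at all is to ask nvidia-smi from a script. The sketch below assumes a driver recent enough to support the --query-gpu option (the older man page quoted above may predate it), and it treats any non-numeric answer such as "N/A" or "[Not Supported]" as "no sensor". The function names are made up for illustration.

```python
import subprocess


def parse_temperature(text):
    """Return the temperature in deg C, or None if the field is not a number."""
    stripped = text.strip()
    if not stripped:
        return None
    first_line = stripped.splitlines()[0].strip()
    try:
        return int(first_line)
    except ValueError:
        # e.g. "N/A" or "[Not Supported]" on boards without a readable sensor
        return None


def read_gpu_temperature(index=0):
    """Query one GPU through nvidia-smi; None means no usable reading."""
    cmd = [
        "nvidia-smi",
        f"--id={index}",
        "--query-gpu=temperature.gpu",
        "--format=csv,noheader,nounits",
    ]
    try:
        result = subprocess.run(
            cmd, capture_output=True, text=True, timeout=10, check=True
        )
    except (OSError, subprocess.SubprocessError):
        # nvidia-smi missing, timed out, or exited with an error
        return None
    return parse_temperature(result.stdout)


if __name__ == "__main__":
    temp = read_gpu_temperature(0)
    if temp is None:
        print("No temperature reading available; fall back to a thermistor.")
    else:
        print(f"GPU temperature: {temp} C")
```

If this prints the fallback message with current drivers installed, that would back up the reading of the man page: the module form factor simply does not expose a sensor.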

Psi*

Tech Monkey

Now I remember seeing that also but couldn't find it again when I wanted to confirm.

As I understand it (from sources I am not able to relocate), the M2090 does not have a "C" version with a fan like the C2070/C2075 because it exceeds PCIe standards for power requirements. Therefore the absence of certain features is not surprising.

Yet ... just in case ... make sure that you have the latest drivers. Despite the above, I would have thought that temperature sensing would be integral to the GPU, as it is in Intel's CPUs.

The thermistors that come with electronic fan controllers are pretty small & could be tucked in between the heat pipe tubes as close as possible to the GPU. Two or three there could control fans pretty nicely. I am almost talking myself into trying one of these things again.
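If you end up reading the thermistors yourself instead of through a controller, the usual conversion from resistance to temperature is the Beta equation, 1/T = 1/T0 + ln(R/R0)/B. Here is a rough sketch, assuming a generic 10k NTC with B = 3950 wired on the low side of a divider with a 10k fixed resistor. Those part values and the function names are assumptions for illustration, not specs of any particular controller's probes.

```python
import math


def ntc_temperature_c(resistance_ohm, r0_ohm=10_000.0, t0_c=25.0, beta=3950.0):
    """Convert NTC thermistor resistance to deg C with the Beta equation.

    r0_ohm is the resistance at t0_c; beta comes from the part's datasheet.
    The defaults describe a generic 10k / B3950 part (an assumption).
    """
    t0_k = t0_c + 273.15
    inv_t = 1.0 / t0_k + math.log(resistance_ohm / r0_ohm) / beta
    return 1.0 / inv_t - 273.15


def divider_resistance(adc_fraction, r_fixed_ohm=10_000.0):
    """Thermistor resistance from a divider reading.

    Assumes the NTC is on the low side, so
    Vout / Vin = R_ntc / (R_fixed + R_ntc), with adc_fraction = Vout / Vin.
    """
    if not 0.0 < adc_fraction < 1.0:
        raise ValueError("adc_fraction must be strictly between 0 and 1")
    return r_fixed_ohm * adc_fraction / (1.0 - adc_fraction)


if __name__ == "__main__":
    for fraction in (0.5, 0.3, 0.2):
        r = divider_resistance(fraction)
        print(f"Vout/Vin={fraction:.2f}  R={r:8.0f} ohm  T={ntc_temperature_c(r):5.1f} C")
```

The Beta equation is only an approximation, but over the range a heatsink will see it should be close enough to drive a fan.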
