DarkStarr

Tech Monkey

So what do we think of the Titan? I doubt it will stay within that 250 W envelope; I think in actual testing it will end up much closer to 300 W or more. I gotta say, I dunno how well it's gonna sell at that premium, because a lot of people who bought 680s at launch price aren't gonna want to sell them for hundreds less to buy new, especially if what they have works fine. That's not to say some won't, but I imagine the resale value of the 680s is gonna drop quite a bit. I also wonder how well it will live up to expectations, since Nvidia is known for artificially limiting GPU compute. Will this card show its true power, or will it be more of an "oh... it's basically where the 680's compute should have been" (F@H, for example)? Personally, I think it's too late for strong sales, and in most situations it won't be enough to convince users to upgrade. I do hope, however, that it puts some pressure on AMD and they step up the Radeons, even if it means my 7970 is outdated.

EDIT: WOW! Only an 8+6 pin setup! That doesn't bode too well for overclocking..... I mean, if it draws more than 250 W, that isn't much headroom.
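Rough headroom math for an 8+6 pin card, as a minimal sketch assuming the standard in-spec limits (75 W from the PCIe x16 slot, 75 W per 6-pin, 150 W per 8-pin). The function names are just illustrative, and real cards can pull past spec, so treat this as a ballpark ceiling, not a hard overclocking limit.

```python
# In-spec power limits in watts (PCIe x16 slot and auxiliary connectors).
PCIE_SLOT_W = 75
SIX_PIN_W = 75
EIGHT_PIN_W = 150


def board_power_budget(six_pin: int, eight_pin: int) -> int:
    """Max in-spec board power for a card with the given aux connectors."""
    return PCIE_SLOT_W + six_pin * SIX_PIN_W + eight_pin * EIGHT_PIN_W


def headroom(tdp_w: int, six_pin: int = 1, eight_pin: int = 1) -> int:
    """Watts left between a rated TDP and the in-spec connector budget."""
    return board_power_budget(six_pin, eight_pin) - tdp_w


print(board_power_budget(six_pin=1, eight_pin=1))  # 300
print(headroom(250))  # 50
```

So a 250 W card on 8+6 pin only has about 50 W of in-spec room, and if it really sits closer to 300 W there's basically nothing left.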

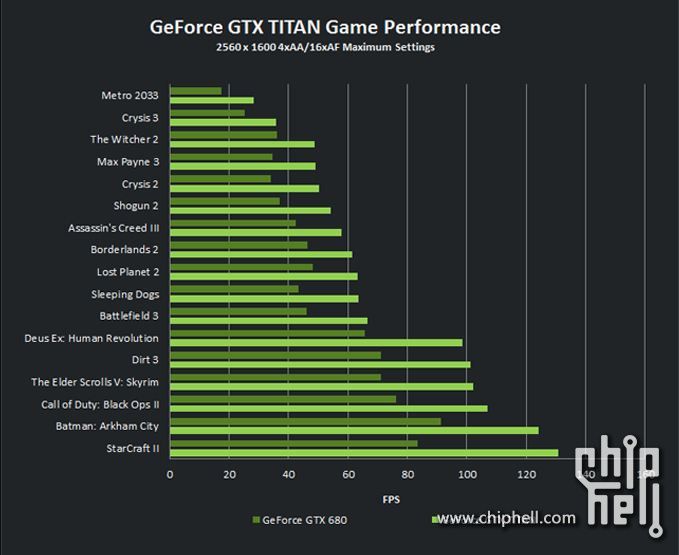

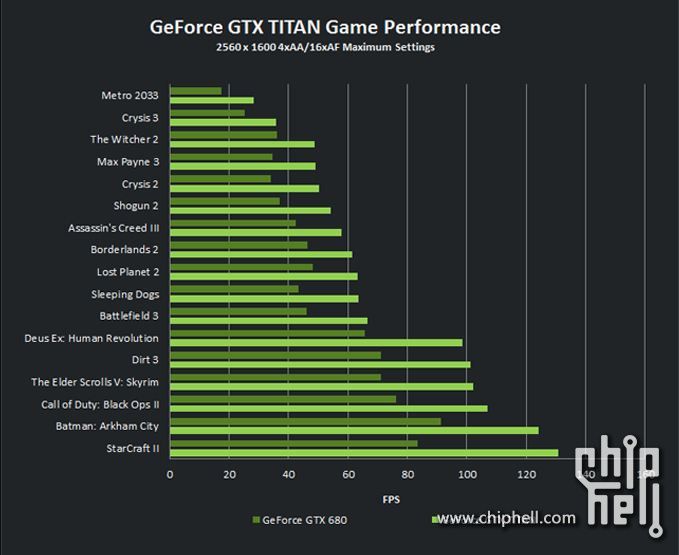

EDIT 2: Neat little graph, which, if true, is pretty damn impressive.
